I’ve been thinking a lot lately about how information retrieval is fundamentally shifting for AI agents.

If you look in the trenches of AI engineering right now, the data layer is a bit of a mess. For the last couple of years, the industry has been running on naive RAG. You take your documents, slice them into chunks, push them through a frozen embedding model like one of OpenAI’s text-embedding-3 models, dump them into a vector database, and perform a cosine similarity search whenever a user asks a question.
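To make that pipeline concrete, here is a minimal sketch of the chunk, embed, and cosine-search flow. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and every name here (`chunk`, `build_index`, `top_k`) is hypothetical rather than any vendor’s API.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 40) -> list[str]:
    """Slice a document into fixed-size word windows (no overlap)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(docs: list[str], size: int = 40) -> list[tuple[str, Counter]]:
    """The 'vector database': a list of (chunk text, vector) pairs."""
    return [(c, embed(c)) for d in docs for c in chunk(d, size)]


def top_k(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


docs = [
    "Refunds are processed within five business days of the return.",
    "Our API rate limit is one hundred requests per minute per key.",
]
index = build_index(docs)
print(top_k(index, "what is the api rate limit", k=1))
```

Everything interesting in this design is decided before the first query arrives: the chunk size, the embedding function, and the similarity metric are all fixed up front.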

That worked, until agents got smarter and context windows exploded.

Today, an agent typically has a suite of retrieval tools and decides which ones to invoke based on the incoming query. A RAG pipeline is no longer the whole solution; it’s just one tool in the kit, sitting alongside BM25 text indices, web search, or direct API calls.

When I map this to a traditional search pipeline, two major shifts stand out:

- The shift in Query Understanding: In early RAG, we pushed query understanding into the vector store itself. It would return the top-k similar candidates, and the generative model would act as a summarizer.

- The rise of Iterative Retrieval: In modern agentic retrieval (especially since the release of models like Claude Sonnet 4.5 in September 2025), the model handles query understanding upfront. It decomposes the intent and orchestrates multiple retrieval calls across various systems, and the results are then reranked together. This process is often iterative: the agent judges the retrieved results and decides whether it needs to go back for more. The loop adds latency, but the agent’s judgment acts as the ultimate reranker. If the goal is the right answer, the agentic loop is hard to beat.
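The iterative loop can be sketched in a few lines. This is an illustration under heavy assumptions: `decompose`, `is_sufficient`, and `refine` are hypothetical stubs standing in for model calls, the retrievers are toy keyword matchers, and merging is a simple de-duplication rather than a real reranker.

```python
from typing import Callable

Retriever = Callable[[str], list[str]]


def keyword_retriever(corpus: list[str]) -> Retriever:
    """Toy retriever: return documents sharing at least one token with the query."""
    def search(query: str) -> list[str]:
        terms = set(query.lower().split())
        return [doc for doc in corpus if terms & set(doc.lower().split())]
    return search


def agentic_retrieve(query, retrievers, decompose, is_sufficient, refine, max_rounds=3):
    """Fan out sub-queries, merge the hits, judge them, and loop if needed."""
    collected: list[str] = []
    sub_queries = decompose(query)
    for round_no in range(1, max_rounds + 1):
        for sub_query in sub_queries:
            for retrieve in retrievers.values():
                for hit in retrieve(sub_query):
                    if hit not in collected:  # merge results across tools
                        collected.append(hit)
        if is_sufficient(query, collected):
            return collected, round_no
        sub_queries = refine(query, collected)  # go back for what is missing
    return collected, max_rounds


# Hypothetical stubs: in a real agent each of these would be a model call.
def decompose(query: str) -> list[str]:
    return [query.split()[0]]  # deliberately incomplete first pass


def is_sufficient(query: str, results: list[str]) -> bool:
    return all(any(term in doc for doc in results) for term in query.split())


def refine(query: str, results: list[str]) -> list[str]:
    return [t for t in query.split() if not any(t in doc for doc in results)]


retrievers = {
    "code": keyword_retriever(["deploy uses blue-green rollout"]),
    "wiki": keyword_retriever(["rollback is triggered by the healthcheck script"]),
}
results, rounds = agentic_retrieve("deploy rollback", retrievers, decompose, is_sufficient, refine)
print(rounds, results)
```

The first pass only covers part of the question, so the judge sends the agent back for a second round. That extra round is where the latency cost comes from.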

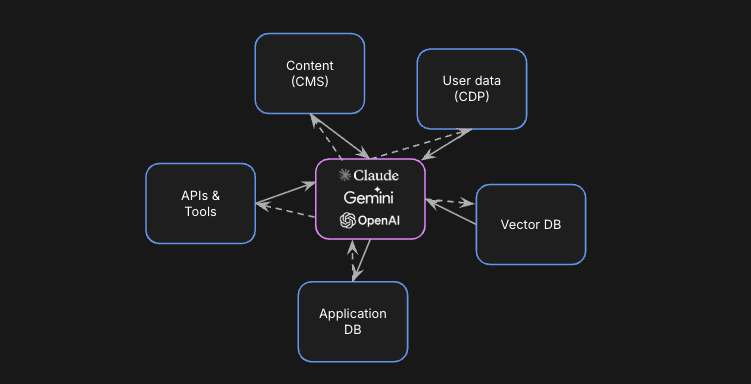

Because of this shift, we’re seeing a demand for a highly heterogeneous data layer. Vector stores were supposed to be the “long-term memory” for AI, but it turns out semantic understanding is just one component of the stack.

In fact, grep is arguably the dominant retrieval system right now. If you look at the internals of Cursor or Claude Code, they rely heavily on grep for codebase retrieval. This makes perfect sense: code is inherently lexical. When you need to find a specific variable, function name, or file path, exact string matching is exactly what you want. Pair grep with a Language Server Protocol (LSP) server for finding references and traversing code structure, and code search agents gain relatively little from semantic indexes, even on massive codebases.

So why is grep winning? It’s not necessarily that it’s better than a vector store; it’s just frictionless. For local file use cases, it requires no indexing and thrives on simplicity. Furthermore, if we are honest about the long tail of retrieval use cases, they aren’t enterprise-scale document lakes. A massive portion of agentic memory is actually just small sets of markdown files, like a local Claude Code setup or an OpenAPI spec. For these use cases, raw grep is perfectly sufficient. It’s a portable tool that any agent with CLI access can use. It is the ultimate retrieval starting point.

Setting up a traditional vector database for an agentic application is an operations nightmare. To get even a simple “memory” working, an engineer has to:

- Choose an embedding model: Weighing the latency of local models vs. the cost and rate limits of API-based ones.

- Design a chunking strategy: Making arbitrary, brittle decisions about character counts, recursive headers, and overlap percentages.

- Build a sync pipeline: Ensuring that every time a file changes in a GitHub repo or a Slack message is sent, the vector store updates without creating duplicates or stale indices.
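To give a feel for the third item, here is a minimal sketch of an idempotent sync step that uses content hashes to avoid duplicates and stale entries. `ChunkIndex` and its `sync` method are hypothetical. A real pipeline would embed the added chunks and delete the removed ones in the vector store rather than just counting them.

```python
import hashlib


class ChunkIndex:
    """Tracks which chunks are indexed per source, keyed by content hash."""

    def __init__(self) -> None:
        # source_id -> {sha256 of chunk text: chunk text}
        self._by_source: dict[str, dict[str, str]] = {}

    def sync(self, source_id: str, chunks: list[str]) -> dict[str, int]:
        """Reconcile one source's chunks and report what changed."""
        new = {hashlib.sha256(c.encode("utf-8")).hexdigest(): c for c in chunks}
        old = self._by_source.get(source_id, {})
        added = new.keys() - old.keys()      # would need embedding + upsert
        removed = old.keys() - new.keys()    # would need deletion (stale)
        unchanged = new.keys() & old.keys()  # safe to skip entirely
        self._by_source[source_id] = new
        return {"added": len(added), "removed": len(removed), "unchanged": len(unchanged)}


index = ChunkIndex()
first = index.sync("README.md", ["intro", "install"])
second = index.sync("README.md", ["intro", "install v2"])
third = index.sync("README.md", ["intro", "install v2"])
print(first, second, third)
```

Even this toy version has to hold state per source and reason about deletions, which is the operational burden the list above is pointing at.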

grep sidesteps all of this. It’s deterministic and instant, and with 1M+ token context windows you can just dump the raw results in and let the model’s attention mechanism do the heavy lifting.

That all being said, raw grep obviously isn’t a silver bullet. Autonomous agents frequently write bad regex or accidentally search through massive node_modules folders, blowing out their context window with garbage. Furthermore, relying on massive context windows is expensive and slow. Forcing a frontier model to parse 500,000 tokens of raw file output iteratively will burn through your API credits and spike your latency, while increasing the risk of “lost in the middle” phenomena.
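Some of these failure modes can be softened at the tool boundary. The sketch below is a hypothetical `guarded_grep` wrapper in pure Python: it rejects invalid regexes with a clear error, skips directories such as node_modules, and caps both the number of matches and the line length. The skip list and limits are assumptions, not recommendations.

```python
import os
import re
import tempfile
from pathlib import Path

SKIP_DIRS = {"node_modules", ".git", "dist", "__pycache__"}  # assumed defaults


def guarded_grep(root: str, pattern: str, max_matches: int = 50, max_line_len: int = 200) -> list[str]:
    """Regex search under root with guardrails suited to an agent's context budget."""
    try:
        rx = re.compile(pattern)
    except re.error as exc:
        # Surface a readable error so the agent can fix its pattern and retry.
        raise ValueError(f"invalid regex {pattern!r}: {exc}") from exc

    hits: list[str] = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune skipped directories in place so os.walk never descends into them.
        dirnames[:] = sorted(d for d in dirnames if d not in SKIP_DIRS)
        for name in sorted(filenames):
            path = Path(dirpath) / name
            try:
                lines = path.read_text(encoding="utf-8").splitlines()
            except (UnicodeDecodeError, OSError):
                continue  # skip binary or unreadable files
            for lineno, line in enumerate(lines, 1):
                if rx.search(line):
                    rel = path.relative_to(root).as_posix()
                    hits.append(f"{rel}:{lineno}:{line[:max_line_len]}")
                    if len(hits) >= max_matches:
                        return hits  # hard cap on output size
    return hits


with tempfile.TemporaryDirectory() as root:
    (Path(root) / "src").mkdir()
    (Path(root) / "src" / "app.py").write_text("def load_config():\n    pass\n", encoding="utf-8")
    (Path(root) / "node_modules" / "lib").mkdir(parents=True)
    (Path(root) / "node_modules" / "lib" / "index.js").write_text(
        "function load_config() {}\n", encoding="utf-8"
    )
    hits = guarded_grep(root, r"load_config")
print(hits)
```

Guardrails like these bound the damage of a bad search, but they do nothing about the cost of reading large results, which is the more fundamental limit.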

This is where advanced retrieval steps back in. Pruning a search space down to highly relevant, targeted pieces of data using a vector store or search engine remains crucial for cost, scale, and latency control.

Agents Should Be Data Architects

Right now, we are comfortable with agents operating at the query layer: an engineer sets up BM25, a vector store, and AST tools, and the agent simply routes between them. But this still relies on a human making static, upfront decisions about the data infrastructure.

I’m a big believer in self-replicating software: the idea that agents shouldn’t just use software to solve tasks; they should build the software required to solve them. When you apply this perspective to agentic retrieval, the logical conclusion is that agents will eventually be the ones choosing, provisioning, and maintaining their own data infrastructure.

Historically, humans choose one piece of data infrastructure, index the data once, and the system is locked in. It is fundamentally stateless: nothing learned from one query changes how the data is stored for the next. But if you give an agent a sandbox where it is responsible for solving the retrieval problem end-to-end, the data infrastructure becomes stateful and highly dynamic.

Imagine an agent dropped into a massive new codebase. For the first few queries, it might just use grep. But as it works, it notices it’s repeatedly searching for relational dependencies that are eating up its context window. Instead of throwing an error or blindly continuing to grep, the agent writes a script to parse the AST, extracts the relationships, and spins up a temporary knowledge graph in its sandbox. Or, if it gets asked broad conceptual questions about a repository’s documentation, it might autonomously decide to embed those specific markdown files and spin up a lightweight local vector index.
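The AST script in that scenario does not need to be elaborate. As a rough sketch, assuming a Python codebase and looking only at direct calls by bare name inside top-level functions, the standard `ast` module is enough to build a small call graph. `call_graph` and `callers_of` are hypothetical helper names.

```python
import ast


def call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function to the bare names it calls directly."""
    graph: dict[str, set[str]] = {}
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            callees = graph.setdefault(node.name, set())
            for child in ast.walk(node):
                # Only plain calls like foo(); method calls (obj.foo()) are ignored.
                if isinstance(child, ast.Call) and isinstance(child.func, ast.Name):
                    callees.add(child.func.id)
    return graph


def callers_of(graph: dict[str, set[str]], target: str) -> list[str]:
    """Answer 'who calls target?' without re-reading any files."""
    return sorted(fn for fn, callees in graph.items() if target in callees)


source = '''
def read_file(path):
    return open(path).read()

def load():
    return read_file("config.toml")

def main():
    cfg = load()
    return read_file("data.csv"), cfg
'''

graph = call_graph(source)
print(callers_of(graph, "read_file"))
```

Once the graph exists, a relational question costs a dictionary lookup instead of another round of grep output in the context window.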

In this world, the index is no longer a static artifact. Every query that comes in defines how the internal data system is improved. The agent uses its knowledge of previous queries, and the bottlenecks it faced, to continuously refine how data is stored for subsequent queries.

Information retrieval is moving from a static pipeline to a dynamic decision tree, where the agent builds and provisions the right tool on the fly based on the data:

| When the agent sees… | It reaches for… | Because |
|---|---|---|
| Local files or a few markdown notes | grep | No indexing, instant, deterministic |
| Code references & call sites | AST / LSP index | Beats fuzzy search for code |
| Exact-term recall over many docs | BM25 | Keyword recall at scale |
| Fuzzy, conceptual questions | Vector DB (Pinecone, Weaviate) | Semantic similarity |
| Multi-hop entity relationships | Knowledge graph (Neo4j) | Relational traversal |
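Read as code, the table is a small routing function. The sketch below is one possible encoding. The `Workload` fields, the order of the checks, and the `small_corpus` threshold are all assumptions made for illustration. An agent would infer these signals from the queries it sees rather than receive them as flags.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    GREP = "grep"
    AST_INDEX = "AST / LSP index"
    BM25 = "BM25"
    VECTOR = "vector DB"
    GRAPH = "knowledge graph"


@dataclass
class Workload:
    doc_count: int
    is_code_reference: bool = False  # looking up references or call sites
    is_conceptual: bool = False      # fuzzy, conceptual questions
    is_multi_hop: bool = False       # multi-hop entity relationships


def choose_backend(w: Workload, small_corpus: int = 200) -> Backend:
    """Pick a retrieval backend from observed workload signals."""
    if w.is_multi_hop:
        return Backend.GRAPH
    if w.is_code_reference:
        return Backend.AST_INDEX
    if w.doc_count <= small_corpus:
        return Backend.GREP  # small local sets: skip indexing altogether
    if w.is_conceptual:
        return Backend.VECTOR
    return Backend.BM25  # exact-term recall over many documents


print(choose_backend(Workload(doc_count=12)))
print(choose_backend(Workload(doc_count=50_000, is_conceptual=True)))
```

The point is less the specific rules than the fact that they are cheap to re-evaluate as the workload changes.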

Just as human engineers graduate from grep when a project becomes too vast or complex, agents will graduate too. The only difference is, they’ll be the ones writing the migration.

The Three Layers of Agentic Retrieval Infrastructure

For agents to actually operate as data architects navigating this decision tree, the underlying infrastructure must shift from rigid, pre-configured silos to flexible building blocks.

(Black-box search APIs like Exa and verticalized SaaS like Glean or Algolia sit outside this picture — they’re complete endpoints, not the infra primitives an agent composes into its own memory.)

Three layers, but not a strict stack: two data tiers at different scales — local ephemeral sandboxes and their distributed specialized equivalents — and a control plane that sits above both and can reach into either, depending on what the task demands.

1. Ephemeral Sandboxes — the local tier (DuckDB, SQLite, Local AST, in-memory vector DBs). Not every piece of data needs to be committed to permanent storage. This layer serves as the agentic equivalent of a scratchpad. When an agent enters a new repository to resolve an issue, it needs the ability to spin up temporary infrastructure via protocols like MCP (Model Context Protocol). It might dump a subset of relational data into DuckDB or build an in-memory vector index of recent logs, execute a series of complex queries for that single session, and simply tear it down when the task is complete. It is high-speed, low-stakes, temporary memory — and it is where most agent sessions start today. This is exactly what coding agents like Claude Code do when they drop into a repo: grep, read, build a local AST, and reason — no index server required.
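A scratchpad of this kind fits in a dozen lines. This sketch uses Python’s built-in `sqlite3` with an in-memory database as a stand-in for DuckDB. The ticket rows and table schema are made up for illustration.

```python
import sqlite3
from contextlib import closing

# Hypothetical slice of data the agent pulled in for this one session.
rows = [
    ("T-1", "checkout", "timeout"),
    ("T-2", "checkout", "timeout"),
    ("T-3", "search", "500"),
]

# ":memory:" creates a database that lives only as long as the connection.
with closing(sqlite3.connect(":memory:")) as db:
    db.execute("CREATE TABLE tickets (id TEXT, service TEXT, error TEXT)")
    db.executemany("INSERT INTO tickets VALUES (?, ?, ?)", rows)
    summary = db.execute(
        "SELECT service, error, COUNT(*) AS n FROM tickets "
        "GROUP BY service, error ORDER BY n DESC, service"
    ).fetchall()
# Leaving the block closes the connection and discards the whole database.

print(summary)
```

Nothing is provisioned and nothing needs cleaning up afterwards, which is what makes this tier low-stakes.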

2. Specialized Storage Primitives — the distributed tier (Pinecone, Elasticsearch, Neo4j). These are, in most cases, the distributed scale-out cousins of the local primitives above: Pinecone is what in-memory FAISS graduates to once a vector index outgrows a single process, Elasticsearch is the distributed counterpart to grep, and Postgres or Supabase is where SQLite ends up once data needs persistence and multi-tenant access. When an agent is tasked with diagnosing an issue by referencing a historical index of every customer support ticket ever resolved, ephemeral sandboxes won’t cut it — it routes the data into these heavy lifters instead. They require strict schema and cluster management, and serve as the “anchor” nodes of an agent’s persistent memory.

3. Unified Orchestration & Routing — the control plane (Shaped, Mem0, unified API gateways). This is the connective tissue of the agentic stack, and the important thing to notice is that it does not sit on top of the distributed tier passing requests down one level at a time. It reaches into both the local sandbox and the distributed store through the same declarative interface, so an agent can query a single-process DuckDB scratchpad and a multi-node Pinecone cluster without caring about the difference. Beneath that interface, it handles the plumbing — keeping heterogeneous databases in sync, routing, and caching — so neither the developer nor the agent has to manually wire together five different databases to execute the decision tree above.

You might be wondering: if the agent is the data architect, shouldn’t it be the orchestration layer?

Yes and no. The agent handles the cognitive orchestration — it makes the architectural decisions about which database to use, when to spin it up, and how to query it. But we don’t want to waste expensive, slow frontier-model compute on the mechanical drudgery of data plumbing, and we really don’t want it touching security.

Specialized stores are just storage; they don’t handle pipelines or access permissioning. If an agent decides to build an index from live data in Shopify, Amplitude, or a secured internal wiki, we don’t want the LLM writing raw OAuth flows, managing SaaS API rate limits, or manually enforcing user-level access controls (RBAC) on every query. Instead, the agent should be able to simply declare “keep this Shopify data synced to this local index for this authorized user,” and the control plane handles the rest — OAuth, rate limits, RBAC, caching, schema evolution — giving the agent the clean, secure API primitives it needs to execute its architecture without getting bogged down in boilerplate integrations.

Building the Agentic Retrieval Plane

If we accept that agents will be the ones making architectural decisions, it fundamentally changes how we design this third layer, the unified data abstraction and ETL routing.

Historically, data pipelines were built with human engineers in mind. We have tools like Fivetran or Airbyte, which are heavily reliant on GUI dashboards, static configurations, and rigid schemas. But an autonomous agent doesn’t want to click through a dashboard, and it certainly doesn’t want to be locked into a static pipeline it can’t modify when the data context shifts.

So, what does the ideal technology or solution look like for this routing layer? An agent-native data control plane needs to possess a few core characteristics:

1. Declarative, API-First Provisioning. The layer must be entirely controllable via code, ideally integrating seamlessly with standard protocols like MCP. The agent shouldn’t have to write the execution logic. It should be able to pass a JSON payload that essentially says: “Connect to this specific Shopify store, extract the last 30 days of inventory data, apply a recursive markdown chunker, and sink it into my ephemeral DuckDB instance.” The routing layer acts as the compiler for this intent, executing the pipeline securely in the background.
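As a sketch of what such a payload might look like, the snippet below parses a JSON spec, validates it, and “compiles” it into an ordered plan. The field names, the `ALLOWED_SINKS` set, and `compile_plan` are all hypothetical; no real control-plane API is implied.

```python
import json
from dataclasses import dataclass

ALLOWED_SINKS = {"duckdb", "sqlite", "neo4j", "pinecone"}  # assumed sink names


@dataclass(frozen=True)
class PipelineSpec:
    source: str
    resource: str
    lookback_days: int
    chunker: str
    sink: str

    @classmethod
    def from_json(cls, payload: str) -> "PipelineSpec":
        """Parse and validate; unknown or missing fields raise TypeError."""
        spec = cls(**json.loads(payload))
        if spec.lookback_days <= 0:
            raise ValueError("lookback_days must be positive")
        if spec.sink not in ALLOWED_SINKS:
            raise ValueError(f"unsupported sink: {spec.sink}")
        return spec


def compile_plan(spec: PipelineSpec) -> list[str]:
    """Turn declared intent into ordered steps the routing layer would execute."""
    return [
        f"extract {spec.resource} from {spec.source} (last {spec.lookback_days} days)",
        f"transform with {spec.chunker}",
        f"load into {spec.sink}",
    ]


payload = json.dumps({
    "source": "shopify",
    "resource": "inventory",
    "lookback_days": 30,
    "chunker": "recursive_markdown",
    "sink": "duckdb",
})
plan = compile_plan(PipelineSpec.from_json(payload))
print("\n".join(plan))
```

The agent only states what it wants. Validation failures come back as ordinary errors it can read and correct, and the execution details stay on the other side of the interface.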

2. Dynamic and Late-Binding Transformations. In a traditional pipeline, chunking strategies and embedding models are decided upfront. But in an agentic world, the agent learns from its queries. The ideal routing layer allows the agent to dynamically inject custom transformation logic into the pipeline on the fly. If the agent notices it is missing critical relationship context in its vector searches, it can ping the routing layer API to update the pipeline: “Re-index these documents, but this time, run them through an LLM extraction step first to pull out key entities, and sink that into Neo4j.”

3. Identity-Aware by Default. Security and permissioning cannot be an afterthought left to the agent. A frontier model cannot be trusted to independently enforce Row-Level Security. The ideal routing layer handles identity as a first-class primitive. When the agent initiates a retrieval query, it passes the user’s token to the routing layer, which acts as a secure proxy. The layer ensures that whether the data is coming from a live Slack API or a cached Pinecone index, the agent only receives the chunks that specific user is authorized to see.
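A minimal sketch of that proxy behavior follows, assuming each chunk carries the set of groups allowed to read it and that tokens resolve to groups via a lookup. The `TOKEN_GROUPS` table, `Chunk`, and `secure_retrieve` are hypothetical. A real layer would verify tokens against an identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: frozenset[str]


# Hypothetical token-to-groups table standing in for an identity provider.
TOKEN_GROUPS = {
    "tok-alice": {"eng", "all"},
    "tok-bob": {"all"},
}


def resolve_groups(token: str) -> set[str]:
    """Resolve a user token to its groups, refusing unknown tokens."""
    if token not in TOKEN_GROUPS:
        raise PermissionError("unknown or expired token")
    return TOKEN_GROUPS[token]


def secure_retrieve(token: str, query: str, store: list[Chunk]) -> list[str]:
    """Filter results by the caller's groups before the agent ever sees them."""
    groups = resolve_groups(token)
    matches = [c for c in store if query.lower() in c.text.lower()]
    return [c.text for c in matches if c.allowed_groups & groups]


store = [
    Chunk("Public roadmap: Q3 launch", frozenset({"all"})),
    Chunk("Internal roadmap: unreleased pricing", frozenset({"eng"})),
]
print(secure_retrieve("tok-alice", "roadmap", store))
print(secure_retrieve("tok-bob", "roadmap", store))
```

Because the filter runs inside the proxy, a prompt-injected or simply mistaken agent cannot widen its own access by rewording the query.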

4. Built-in Observability and Feedback Loops. If agents are going to continuously improve their own data infrastructure based on incoming queries, they need telemetry. The routing layer must expose observability metrics back to the agent in a machine-readable format. The agent needs to be able to query the routing layer to ask: “What was the cache hit rate for the last 100 queries?” or “How many tokens am I burning on this specific BM25 index vs the vector store?” This telemetry is the feedback loop that allows the self-replicating software to optimize its own architecture.

Ultimately, the ideal routing layer stops treating the agent as just a “user” of a database, and starts treating it like a first-class developer. It provides the heavy industrial machinery (the ETL, the syncs, the auth, the cache) so the agent can focus entirely on being the architect.
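For the telemetry described in point 4, a machine-readable report could be as simple as the sketch below. The `Telemetry` class, the `QueryEvent` fields, and the report keys are hypothetical; the 100-query window mirrors the example question in the text.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class QueryEvent:
    backend: str
    cache_hit: bool
    tokens: int


class Telemetry:
    """Keeps a sliding window of recent queries and summarizes them on demand."""

    def __init__(self, window: int = 100) -> None:
        self.events: deque[QueryEvent] = deque(maxlen=window)

    def record(self, event: QueryEvent) -> None:
        self.events.append(event)  # oldest events fall off automatically

    def report(self) -> dict:
        total = len(self.events)
        hits = sum(e.cache_hit for e in self.events)
        tokens: dict[str, int] = {}
        for e in self.events:
            tokens[e.backend] = tokens.get(e.backend, 0) + e.tokens
        return {
            "queries": total,
            "cache_hit_rate": hits / total if total else 0.0,
            "tokens_by_backend": tokens,
        }


telemetry = Telemetry(window=100)
telemetry.record(QueryEvent("bm25", cache_hit=True, tokens=300))
telemetry.record(QueryEvent("vector", cache_hit=False, tokens=1200))
telemetry.record(QueryEvent("bm25", cache_hit=True, tokens=200))
telemetry.record(QueryEvent("vector", cache_hit=False, tokens=900))
report = telemetry.report()
print(report)
```

A plain dictionary like this is something an agent can read directly and act on, for example by moving a hot query pattern from the vector store to a cheaper index.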

Conclusion: Letting Agents Build Their Own Mind

For the last few years, we’ve treated AI memory as a static, human-driven engineering problem. We built rigid RAG pipelines, argued over chunking strategies, and shoved everything into vector databases, hoping it would be enough to give our models context.

But as models have grown smarter, they’ve started to outgrow these rigid structures. The widespread reliance on grep and massive context windows in tools like Cursor isn’t a step backward; it’s a symptom of models demanding frictionless, dynamic access to exactly the data they need, exactly when they need it.

We are entering an era where the concept of a single “long-term memory” database for AI is obsolete. Instead, information retrieval is becoming a fluid, heterogeneous decision tree. And the most profound shift is who gets to navigate that tree.

If we truly believe in the promise of self-replicating software, where agents write code and build tools to solve their own bottlenecks, we have to accept that agents will soon become their own data architects. They will observe the queries they are tasked with answering, provision temporary sandboxes, route complex workflows into specialized heavy-lifters, and write their own migrations as the scope of their work expands.

Our job as human engineers is no longer to build the perfect index. Our job is to build the sandbox.

By focusing on the underlying control plane (the declarative, identity-aware routing layers that handle the mechanical drudgery of ETL and synchronization), we can step out of the critical path. If we provide agents with the right infrastructure building blocks and treat them as first-class developers, we won’t need to guess how to structure their data. They will build exactly the memory they need, query by query.
